What is an AI agent?

An AI agent is an autonomous software entity designed to perform tasks by perceiving its environment, processing information, and taking actions to achieve specific goals. An AI agent typically comprises three core components:

- Intelligence: The large language model (LLM) that drives the agent’s cognitive capabilities, enabling it to understand and generate human-like text. This component is usually guided by a system prompt that defines the agent’s goals and the constraints it must follow.

- Knowledge: The domain-specific expertise and data that the agent leverages to make informed decisions and take action. Agents utilize this knowledge base as context, drawing on past experiences and relevant data to guide their choices.

- Tools: A suite of specialized tools that extend the agent’s abilities, allowing it to efficiently handle a variety of tasks. These tools can include API calls, executable code, or other services that enable the agent to complete its assigned tasks.

What are the three core components of an AI agent?

What is RAG?

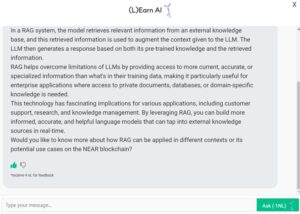

Retrieval-Augmented Generation (RAG) is an AI technique that enhances large language models (LLMs) by integrating relevant information from external knowledge bases. Through semantic similarity calculations, RAG retrieves document chunks from a vector database, where these documents are stored as vector representations. This process reduces the generation of factually incorrect content, significantly improving the reliability of LLM outputs.\cite{RAG}

A RAG system consists of two core components: the vector database and the retriever. The vector database holds document chunks in vector form, while the retriever calculates semantic similarity between these chunks and user queries. The more similar a chunk is to the query, the more relevant it is considered, and it is then included as context for the LLM. This setup allows RAG to dynamically update an LLM’s knowledge base without the need for retraining, effectively addressing knowledge gaps in the model’s training data.

The RAG pipeline operates by augmenting a user’s prompt with the most relevant retrieved text. The retriever fetches the necessary information from the vector database and injects it into the prompt, providing the LLM with additional context. This process not only enhances the accuracy and relevance of responses but also makes RAG a crucial technology in enabling AI agents to work with real-time data, making them more adaptable and effective in practical applications.

How does Retrieval-Augmented Generation (RAG) improve LLM responses?

What is Agent Memory?

AI agents, by default, are designed to remember only the current workflow, with their memory typically constrained by a maximum token limit. This means they can retain context temporarily within a session, but once the session ends or the token limit is reached, the context is lost. Achieving long-term memory across workflows—and sometimes even across different users or organizations—requires a more sophisticated approach. This involves explicitly committing important information to memory and retrieving it when needed.

Agent Memory with blockchain:

XTrace – A Secure AI Agent Knowledge & Memory Protocol for Collective Intelligence – will leverage blockchain as the permission and integrity layer for agent memory, ensuring that only the agent’s owner has access to stored knowledge. Blockchain is especially useful for this long persistent storage as XTrace provides commitment proof for the integrity of both the data layer and integrity of the retrieval process. The agent memory will be securely stored within XTrace’s privacy-preserving RAG framework, enabling privacy, portability and sharability. This approach provides several key use cases:

Stateful Decentralized Autonomous Agents:

- XTrace can act as a reliable data availability layer for autonomous agents operating within Trusted Execution Environments (TEEs). Even if a TEE instance goes offline or if users want to transfer knowledge acquired by the agents, they can seamlessly spawn new agents with the stored network, ensuring continuity and operational resilience.

XTrace Agent Collaborative Network:

- XTrace enables AI agents to access and inherit knowledge from other agents within the network, fostering seamless collaboration and eliminating redundant processing. This shared memory system allows agents to collectively improve decision-making and problem-solving capabilities without compromising data ownership or privacy.

XTrace Agent Sandbox Test:

- XTrace provides a secure sandbox environment for AI agent developers to safely test and deploy their agents. This sandbox acts as a honeypot to detect and mitigate prompt injection attacks before agents are deployed in real-world applications. Users can define AI guardrails within XTrace, such as restricting agents from mentioning competitor names, discussing political topics, or leaking sensitive key phrases. These guardrails can be enforced through smart contracts, allowing external parties to challenge the agents with potentially malicious prompts. If a prompt successfully bypasses the defined safeguards, the smart contract can trigger a bounty release, incentivizing adversarial testing. Unlike conventional approaches, XTrace agents retain memory of past attack attempts, enabling them to autonomously learn and adapt to new threats over time. Following the sandbox testing phase, agents carry forward a comprehensive memory of detected malicious prompts, enhancing their resilience against similar attacks in future deployments.

How to create a Personalized AI agent?

To create an AI agent with XTrace, there are three main steps to follow:

- Define the Purpose: Determine the specific tasks and goals the agent will accomplish.

- Choose the AI Model: Select a suitable LLM or other machine learning models that align with the agent’s requirements.

- Gather and Structure Knowledge: Collect domain-specific data and organize it in a way that the agent can efficiently use.

- Develop Tools and Integrations: Incorporate APIs, databases, or other services that the agent may need to interact with.

How to create a Private Personalized AI agent with XTrace?

XTrace can serve as the data connection layer between the user and the AI agents. Users will be able to securely share data from various apps into the system to create an AI agent that is aware of the user’s system. By leveraging XTrace’s encrypted storage and access control mechanisms, AI agents can be personalized without compromising user privacy. Key features include:

- Seamless Data Integration: Aggregating data from multiple sources securely.

- Granular Access Control: Ensuring only authorized AI agents can access specific data.

- Privacy-Preserving Computation: Enabling AI agents to learn from user data without exposing it.

- Automated Insights: Leveraging AI to provide personalized recommendations based on securely stored data.

- User Ownership: Empowering users with full control over their data and how it is used.

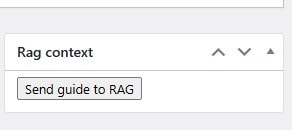

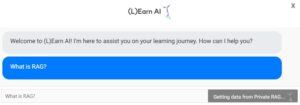

How do we use XTrace private RAG for (L)Earn AI🕺?

- We send learning materials in LLM friendly format to LNC RAG at XTrace

- Once (L)Earn AI🕺 gets the question, first it talks to private RAG and retrieve relevant information

- The LLM hosted at NEAR AI infrastructure generates a response based on both its pre-trained knowledge and the retrieved information!

- Learners are encouraged to provide feedback and get 4nLEARNs to improve (L)Earn AI🕺 to work better for NEAR community!

Updated: February 24, 2025

Top comment

The XTrace can serve as the data connection layer, facilitating communication between users and AI agents.

I'm excited to see how XTrace private RAG is being utilized to enhance the (L)Earn AI experience! The idea of sending learning materials in an LLM-friendly format to the RAG and then retrieving relevant information to inform AI responses is genius. I'm curious to know more about the type of feedback learners are encouraged to provide and how that feedback is used to improve the AI. Is there a way to track the progress and effectiveness of the 4nLEARNs system? Additionally, how does the NEAR community plan to expand the capabilities of (L)Earn AI in the future?

This explanation of how XTrace private RAG is used for (L)Earn AI is fascinating! I love how the process involves a seamless collaboration between the LNC RAG, private RAG, and the LLM hosted on NEAR AI infrastructure. The fact that learners can provide feedback and earn 4nLEARNs to improve the AI is a great incentive to encourage community engagement. I'm curious to know more about how the feedback mechanism works and how it impacts the AI's performance over time. Can anyone share more insights on this?

Fascinating to see how RAG is bridging the gap between large language models and external knowledge bases! The concept of dynamically updating an LLM's knowledge base without retraining is a game-changer, especially in fields where data is constantly evolving. I'm curious to know more about the scalability of RAG systems – how do they handle the sheer volume of documents in the vector database, and what kind of computational power is required to perform semantic similarity calculations in real-time? Also, what kinds of applications do you envision for RAG, beyond just improving AI agent responses? Could we see RAG being used in areas like sentiment analysis, information retrieval, or even creative content generation?

This concept of using blockchain for agent memory is a game-changer for AI collective intelligence. The ability to store knowledge securely and allow agents to inherit from each other while maintaining ownership and privacy is crucial for collaboration and problem-solving. I'm particularly intrigued by the sandbox testing feature, which enables developers to detect and mitigate prompt injection attacks before deployment. The idea of incentivizing adversarial testing through smart contracts is innovative and could lead to more resilient AI systems. I do wonder, however, how XTrace plans to tackle the potential scalability issues that come with storing vast amounts of agent knowledge on a blockchain. Will there be any limitations on the types of data that can be stored, or plans for data compression and optimization?

This is a game-changer for the NEAR community! I love how (L)Earn AI seamlessly integrates with XTrace's private RAG to provide personalized learning experiences. The fact that learners can give feedback and earn 4nLEARNs to improve the AI's performance is a brilliant incentivization strategy. I'm curious to know more about the LLM friendly format required for the learning materials – is there a specific template or guideline for creators to follow? Also, what kind of feedback is most valuable for the AI's improvement, and how will it be utilized to enhance the learning experience?

I'm really excited about the potential of XTrace to revolutionize the way we interact with AI agents. The idea of having a personalized AI agent that can learn from my data without compromising my privacy is a game-changer. I'm particularly interested in the granular access control feature, which ensures that only authorized AI agents can access specific data. This level of control is crucial in today's data-driven world. However, I do wonder how XTrace plans to address the issue of data quality and accuracy, especially when aggregating data from multiple sources. How will the system ensure that the insights generated are trustworthy and reliable?

Fascinating breakdown of an AI agent's components! I'm struck by the importance of the 'knowledge' component, which seems to be the key to an agent's ability to make informed decisions. I wonder, though, how these agents handle situations where the 'knowledge' base is incomplete or outdated? Do they have mechanisms in place to learn and adapt from new experiences, or would they rely on human intervention to update their knowledge? Also, I'd love to see more exploration of the ethical implications of agents operating with domain-specific expertise – how do we ensure they're making decisions that align with human values and principles?

Fascinating article! I especially appreciate the emphasis on defining the purpose of the AI agent upfront. It's crucial to establish clear goals and tasks to ensure the agent stays focused and effective. I'm curious, though – what kind of domain-specific data is ideal for gathering and structuring knowledge? Are there any specific tools or techniques recommended for organizing this data in a way that the agent can efficiently utilize? Additionally, how do you balance the need for a tailored AI model with the potential risks of bias and limited generalizability?

This breakdown of an AI agent's components is fascinating! I'm particularly intrigued by the distinction between Intelligence and Knowledge. It's clear that the LLM is the brain of the operation, but the Knowledge component raises questions about the kind of domain-specific expertise we're feeding these agents. Are we inadvertently perpetuating biases or gaps in understanding by relying on historical data? How can we ensure that these agents don't simply replicate existing flaws, but instead learn to identify and correct them? I'd love to hear more about how these agents are being designed to tackle these complex issues.

This article provides a fascinating insight into the capabilities of Retrieval-Augmented Generation (RAG) in enhancing large language models. I'm impressed by how RAG's ability to dynamically update an LLM's knowledge base without retraining can address knowledge gaps in the training data. What I'd like to know more about is how RAG handles ambiguous or outdated information in the external knowledge bases. How does it ensure the accuracy and relevance of the retrieved document chunks, especially in fast-paced fields like science or current events? The potential applications of RAG are vast, and I'm excited to see how it'll be used to improve AI agents in real-world scenarios.

Fascinating approach to secure AI agent knowledge and memory using blockchain! I'm particularly intrigued by the potential for XTrace to enable seamless collaboration between agents while maintaining data ownership and privacy. The idea of a shared memory system that allows agents to collectively improve decision-making and problem-solving capabilities is a game-changer. I'm curious to know more about how the smart contract-based guardrails would work in practice, and how the bounty system would incentivize adversarial testing. Additionally, I wonder if there are plans to integrate XTrace with existing AI frameworks or develop it as a standalone solution. Overall, XTrace has the potential to revolutionize the development and deployment of autonomous agents, and I'm excited to see where this technology takes us!

Fascinating insight into creating a personalized AI agent with XTrace! I'm particularly intrigued by the importance of defining the purpose of the agent, as it sets the tone for the entire development process. I wonder, how do you ensure that the chosen AI model accurately reflects the purpose and goals of the agent, especially in domains with nuanced or subjective requirements? Additionally, what kind of domain-specific data is most effective in structuring knowledge for the agent's efficient use? Looking forward to exploring these questions further and unlocking the full potential of personalized AI agents!